Applications are getting bigger.

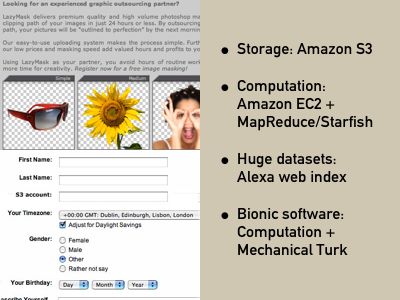

Both commodity storage (such as Amazon S3, a per-GB storage facility with web services access) and commodity computation (such as Amazon EC2, which lets you start up, grow and monitor a server farm programmatically) are coming on-stream.

Consider a future YouTube, something that requires a lot of storage (and, if you used lots of clever algorithms, lots of computation too). There would be no need for you, as an individual web app developer, to deal with hardware and server farms, or even, importantly, a business model. Imagine if, when making their profile on your new website, a user just had to enter their S3 account code, and paid for their online storage through a billing arrangement they managed themselves. That’d be a lot easier. (That’s the second image in the slide.)

To be really compelling, Amazon needs to expose the billing sides of S3 and EC2 as web services.

Taking advantage of all this computation used to require really smart algorithms, such as Google’s MapReduce. This is now being pushed into the mainstream with implementations such as StarFish for Ruby, a client-server distributed computing library. There’s also a rise of functional languages such as Haskell which, being side-effect free, allow even more parallelism. We need the scripting revolution for functional languages, sure, but it’s not so far off.
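To make the MapReduce idea concrete, here is a minimal word-count sketch in Python. It is not Google’s implementation or StarFish’s API; the function names (`map_words`, `shuffle`, `reduce_counts`, `word_count`) are made up for illustration, and a local thread pool stands in for what would really be a farm of separate machines. The point is only that the map step has no side effects, so each document can be handed to a different worker without any coordination.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_words(document):
    """Map step: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Group emitted values by key, the job a framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_counts(values):
    """Reduce step: collapse all values for one key into a single total."""
    return sum(values)


def word_count(documents, workers=4):
    """Run the map step across a pool of workers, then shuffle and reduce.

    Assumption: threads here are only a stand-in for remote workers;
    they illustrate the structure, not real speed-up.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_words, documents))
    pairs = [pair for chunk in mapped for pair in chunk]
    return {word: reduce_counts(values) for word, values in shuffle(pairs).items()}


if __name__ == "__main__":
    print(word_count(["the cat sat", "the dog sat down"]))
```

Because `map_words` and `reduce_counts` touch nothing outside their arguments, swapping the thread pool for workers on rented servers changes the plumbing but not the logic, which is roughly the appeal of the side-effect-free style.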

What can we do with this massive computation and storage? We can deal with huge datasets, such as Alexa’s web search platform (that’s also from Amazon—I’m spotting a pattern here). Building a commodity Google is really not that far away.

We can also use really complex algorithms… or we can just fake it. Instead of applying computing algorithms, Google are channelling their human traffic through Image Labeler to tag all the images on the web (read more). And then there’s the generalisation of this, Amazon’s Mechanical Turk: artificial artificial intelligence, accessed, again, programmatically.

These smarts have, on the O’Reilly Radar blog, been dubbed bionic software. It’s true: who cares whether the smarts behind this image nudity detection web API are human or machine, so long as it works? (The top-left image is also potentially bionic, but I’ll discuss it later.)
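A rough sketch of why the caller needn’t care, again in Python. Everything here is hypothetical: `machine_labeler` is a toy rule rather than a real classifier, and `make_human_labeler` fakes a human task queue with a dictionary of answers instead of calling Mechanical Turk or any real service. What matters is that both sit behind the same function signature.

```python
from typing import Callable, Dict, Iterable

# A labeler is anything that turns an image URL into a label.
Labeler = Callable[[str], str]


def machine_labeler(image_url: str) -> str:
    """Hypothetical stand-in for an algorithmic classifier (a toy rule)."""
    return "unsafe" if "nsfw" in image_url else "safe"


def make_human_labeler(answers: Dict[str, str]) -> Labeler:
    """Hypothetical stand-in for a human task queue.

    `answers` simulates what human workers have already sent back;
    images nobody has looked at yet come out as "unknown".
    """
    def labeler(image_url: str) -> str:
        return answers.get(image_url, "unknown")
    return labeler


def moderate(image_urls: Iterable[str], labeler: Labeler) -> Dict[str, str]:
    """Label every image; the caller cannot tell who or what did the work."""
    return {url: labeler(url) for url in image_urls}


if __name__ == "__main__":
    urls = ["cat.png", "nsfw-party.png"]
    print(moderate(urls, machine_labeler))
    print(moderate(urls, make_human_labeler({"cat.png": "safe"})))
```

Swapping one labeler for the other is a one-argument change to `moderate`, which is all "bionic" really asks of the application code.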

What will we do with drop-in business models for scaling in storage and server capacity, the ability to deal with huge datasets, and the application of bionics? Really, I’ve only the tiniest idea.

But I know that Amazon have got all the parts, and we’re only a code library and a tweak or two away from having the grass-roots developers creating applications that today require a small team and a load of funding.

Matt Webb, S&W, posted 2006-09-21 (talks on 2006-09-03, 2006-09-17)